Talks

Concepts in Modern Bayesian Experimental Design (Corcoran Memorial Prize)

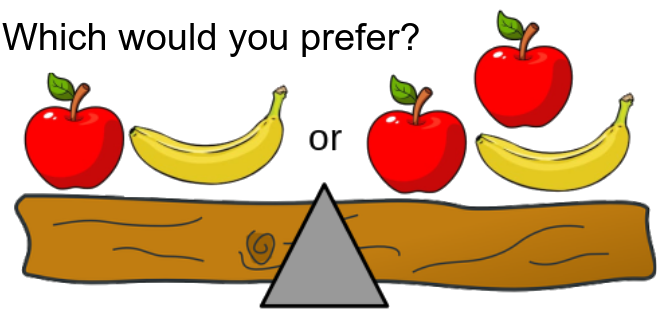

I was truly grateful to be awarded the 2022 Corcoran Memorial Prize in recognition of an outstanding DPhil thesis. The Corcoran Memorial lectures are named in memory of Stephen Corcoran, who was a graduate student in the Department of Statistics at the University of Oxford until his death in 1996. A family bequest established an annual lecture in honour of Stephen, in which distinguished guest lecturers are invited to speak on important aspects of their work. In my case, Professor Michael Gutmann from the School of Informatics, University of Edinburgh, gave a talk on ‘Self-supervised learning for Bayesian experimental design’. I then delivered a talk on part of my DPhil work, entitled ‘Concepts in Modern Bayesian Experimental Design’. My lecture begins with a Bayesian viewpoint on the “weighing prisoners on an island” puzzle, which appeared, among other places, on the show Brooklyn 99.

ICML 2022 Long Presentation: Contrastive Mixture of Posteriors

This is the long presentation that I delivered at ICML 2022 for the paper Contrastive Mixture of Posteriors for Counterfactual Inference, Data Integration and Fairness. The project was born out of several “grand challenges” in computational biology and the analysis of single-cell RNA-seq data. One challenge is integrating different datasets that exhibit some kind of technical variation. Another is predicting the effects of drugs and gene knock-outs on certain cells. In this project, we were able to translate these problems into a representation learning setting and then focus on computational methods to enforce the all-important constraint: z independent of c.

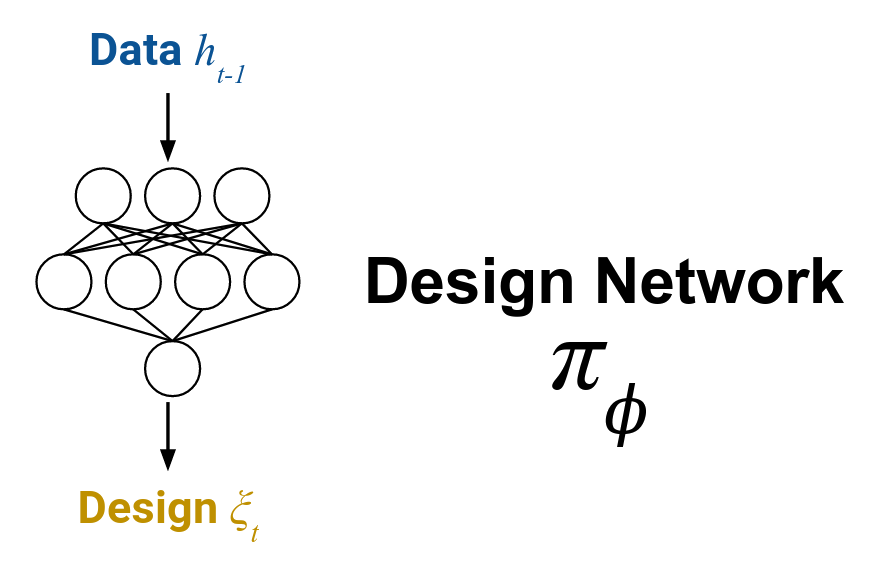

ICML 2021 Long Presentation: Deep Adaptive Design

This is the long presentation that I delivered with Desi Ivanova at ICML 2021 for the paper Deep Adaptive Design: Amortizing Sequential Bayesian Experimental Design. It offers a 25-minute introduction to the DAD method of training a design policy for fast, adaptive experimental design.

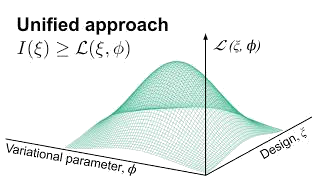

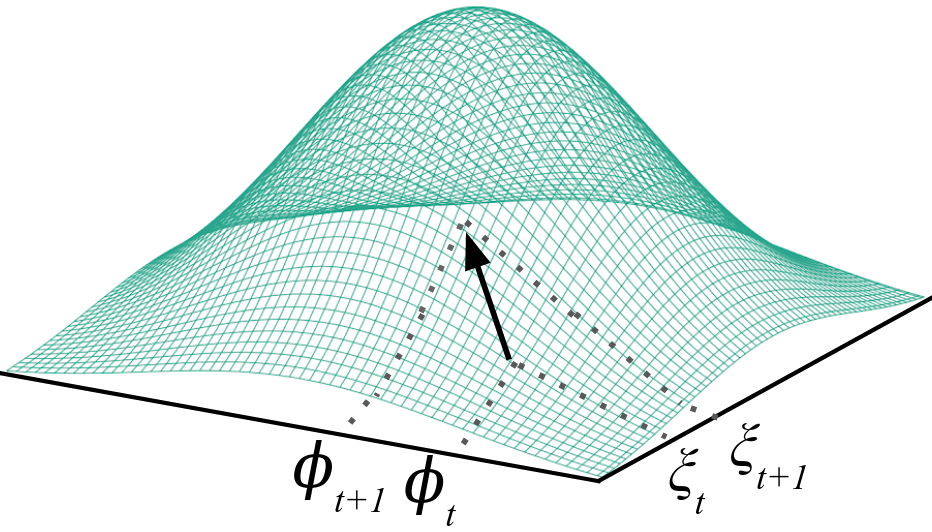

Minisymposium on Model-Based Optimal Experimental Design SIAM CSE 21: Stochastic Gradient BOED

This is the talk, based on our paper A Unified Stochastic Gradient Approach to Designing Bayesian-Optimal Experiments, that I delivered as an invited speaker at the Minisymposium on Model-Based Optimal Experimental Design at SIAM CSE 21. In the talk, I cover the basics of experimental design with Expected Information Gain (EIG), and then turn to the question of how to optimize this quantity efficiently over a large continuous design space without resorting to slower methods like Bayesian optimization.

AISTATS 2020: A Unified Stochastic Gradient Approach to Designing Bayesian-Optimal Experiments

This is the talk that I delivered at AISTATS 2020 for the paper A Unified Stochastic Gradient Approach to Designing Bayesian-Optimal Experiments. The talk offers a short primer on Bayesian Experimental Design before launching into the key problem of optimizing Expected Information Gain using stochastic gradient methods.

NeurIPS 2019 Spotlight: Variational Bayesian Optimal Experimental Design

This is the spotlight talk that I delivered at NeurIPS 2019 for the paper Variational Bayesian Optimal Experimental Design (my talk starts at 14:18). It offers a 5-minute overview of Bayesian Experimental Design and variational methods for estimating Expected Information Gain.